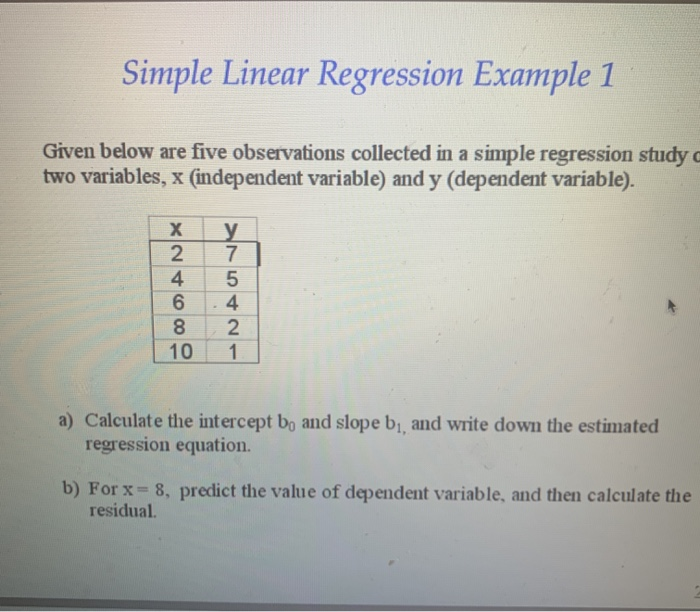

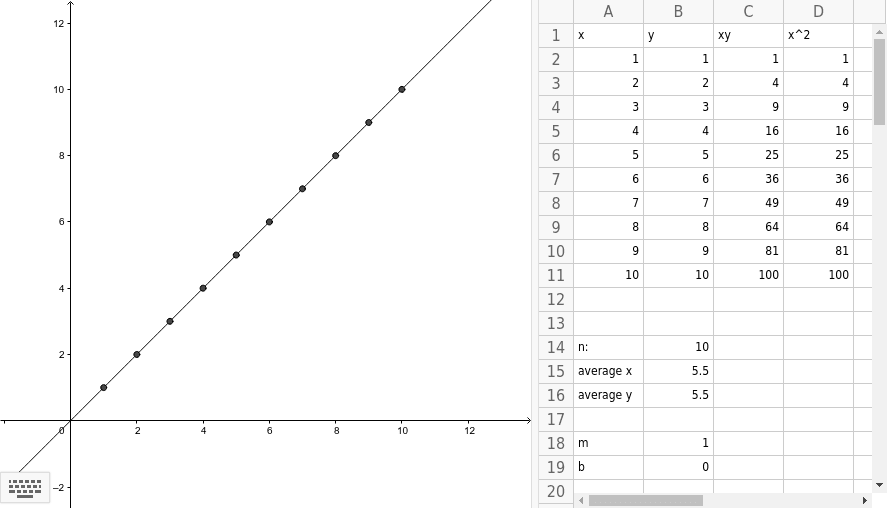

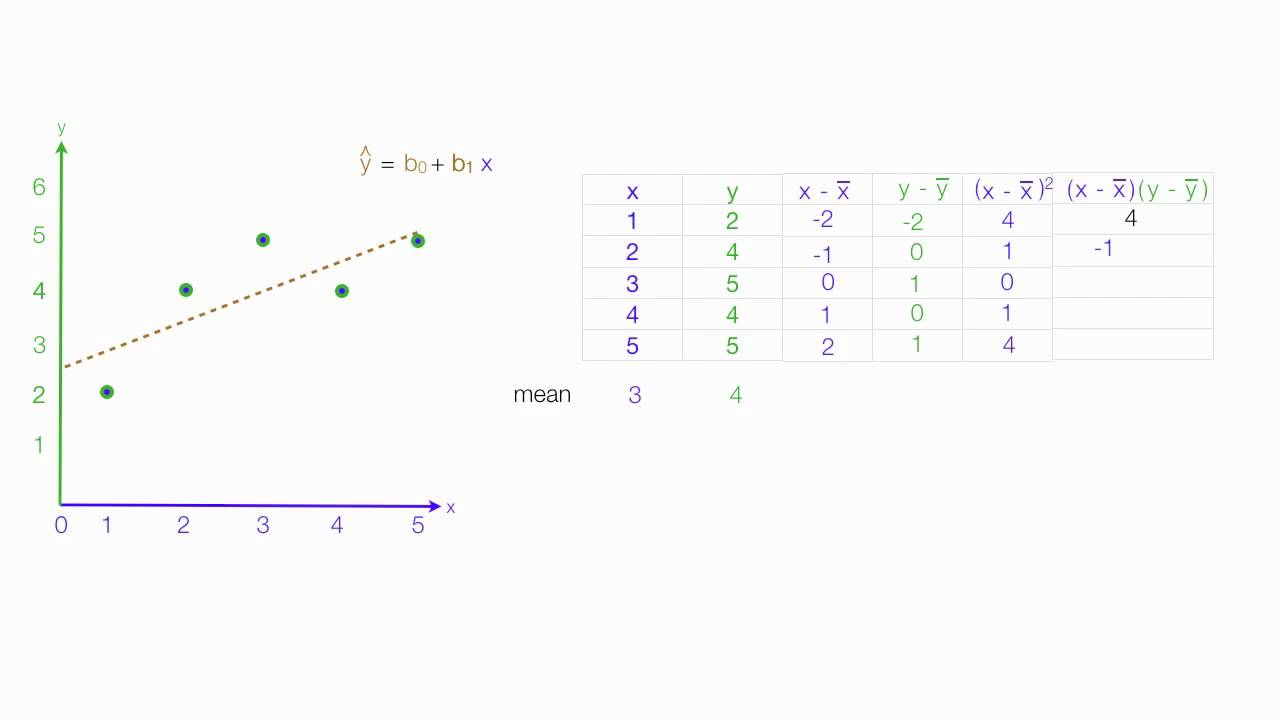

How does the method of least squares help in creating the best-fitting line for a set of data points? It chooses the line that minimizes the sum of the squares of the vertical deviations from each data point to the line (hence, "least squares").

What is the equation for calculating a least squares regression line and how is it derived? The equation is typically expressed as y = a + bx, where 'b' is the slope of the line (calculated as the covariance of x and y divided by the variance of x), and 'a' is the y-intercept (calculated as the mean of y minus 'b' times the mean of x). Both values come from minimizing the sum of squared vertical deviations.

What are the disadvantages of least-squares regression? Outliers can have a disproportionate effect on the fitted line, so it's important to organize your data and validate your model against what your data looks like to make sure it is the right approach to take. It's also important to understand the real-world limitations of a model and ensure that it's not being used to answer questions that it's not suited for.

When calculating least squares regressions by hand, the first step is to find the means of the dependent and independent variables. The second step is to calculate the difference between each value and the mean value for both the dependent and the independent variable; these differences are what the slope is calculated from. The final step is to calculate the intercept, which we can do using the initial regression equation with the values of test score and time spent set as their respective means, along with our newly calculated coefficient.
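The by-hand steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `least_squares_fit` and the `hours` and `scores` values are made up for the example and are not taken from the table in this post.

```python
from statistics import mean


def least_squares_fit(x, y):
    """Return (a, b) for the line y = a + b*x fitted by least squares."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")

    # Step 1: means of the independent and dependent variables.
    x_bar, y_bar = mean(x), mean(y)

    # Step 2: difference between each value and its mean.
    dx = [xi - x_bar for xi in x]
    dy = [yi - y_bar for yi in y]

    # Slope: sum of products of differences over sum of squared x differences.
    sxx = sum(d * d for d in dx)
    if sxx == 0:
        raise ValueError("all x values are identical; the slope is undefined")
    b = sum(p * q for p, q in zip(dx, dy)) / sxx

    # Final step: intercept from the two means and the slope.
    a = y_bar - b * x_bar
    return a, b


# Hypothetical data: hours spent on an essay and the resulting test score.
hours = [1.0, 2.0, 3.0, 4.0, 5.0]
scores = [52.0, 58.0, 61.0, 70.0, 74.0]

a, b = least_squares_fit(hours, scores)
predicted = a + b * 2.35  # predicted score for 2.35 hours of work
print(f"y = {a:.2f} + {b:.2f}x, prediction at 2.35 hours: {predicted:.2f}")
```

For this made-up data the slope works out to about 5.6 and the intercept to about 46.2, so each extra hour is associated with roughly 5.6 more points.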

How do you calculate a least squares regression line by hand? In this section, we'll describe the method of calculating the linear regression between any two data sets.

You should notice that as some scores are lower than the mean score, we end up with negative values. By squaring these differences, we end up with a non-negative measure of deviation from the mean regardless of whether the values are more or less than the mean.

Let's remind ourselves of the equation we need to calculate b: b = ∑(x − x̄)(y − ȳ) / ∑(x − x̄)², where x̄ and ȳ are the means of x and y. The symbol sigma (∑) tells us we need to add all the relevant values together. Doing this for the table above gives the totals that go into the equation. Slotting that information into a calculator allows us to calculate b, which is step one of two to unlock the predictive power of our shiny new model.

Now we have all the information needed for our equation and are free to slot in values as we see fit. If we wanted to know the predicted grade of someone who spends 2.35 hours on their essay, all we need to do is swap that in for X.

While Linear Regression is a powerful and widely used statistical technique, it's essential to consider its assumptions and limitations:

- Linearity: The relationship between the independent and dependent variables must be linear. If the relationship is nonlinear, other methods may be more appropriate.
- Independence: The observations should be independent of each other. In cases of time series or spatial data, other techniques may be more suitable.
- Constant variance: The variance of the error terms should be constant across all levels of the independent variable.
- Normality: The error terms should be normally distributed.

When using Linear Regression, always validate the assumptions and evaluate the model's performance using appropriate metrics, such as the coefficient of determination (R-squared), residual analysis, and cross-validation.
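As a rough illustration of checking a fitted line, the sketch below computes the residuals and the coefficient of determination (R-squared) for a line y = a + bx. The helper name `evaluate_line`, the data, and the coefficients are all hypothetical values chosen for the example, and the sketch does not cover cross-validation or formal tests of the assumptions.

```python
def evaluate_line(x, y, a, b):
    """Return (r_squared, residuals) for the line y = a + b*x on the data."""
    if len(x) != len(y) or not x:
        raise ValueError("x and y must be non-empty and the same length")

    y_bar = sum(y) / len(y)

    # Residual: observed value minus the value predicted by the line.
    residuals = [yi - (a + b * xi) for xi, yi in zip(x, y)]

    ss_res = sum(r * r for r in residuals)        # unexplained variation
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)   # total variation around the mean
    if ss_tot == 0:
        raise ValueError("y values are all identical; R-squared is undefined")

    return 1 - ss_res / ss_tot, residuals


# Hypothetical data and coefficients (assumed, not from this post's table).
hours = [1.0, 2.0, 3.0, 4.0, 5.0]
scores = [52.0, 58.0, 61.0, 70.0, 74.0]
a, b = 46.2, 5.6

r2, residuals = evaluate_line(hours, scores, a, b)
print(f"R-squared: {r2:.3f}")
for h, r in zip(hours, residuals):
    print(f"hours={h:.1f} residual={r:+.2f}")
```

Residuals that fan out or curve as x increases suggest the constant-variance or linearity assumption may not hold, even when R-squared looks high.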
